Invited speaker at The Skin of Things online art-science conference

Abstract

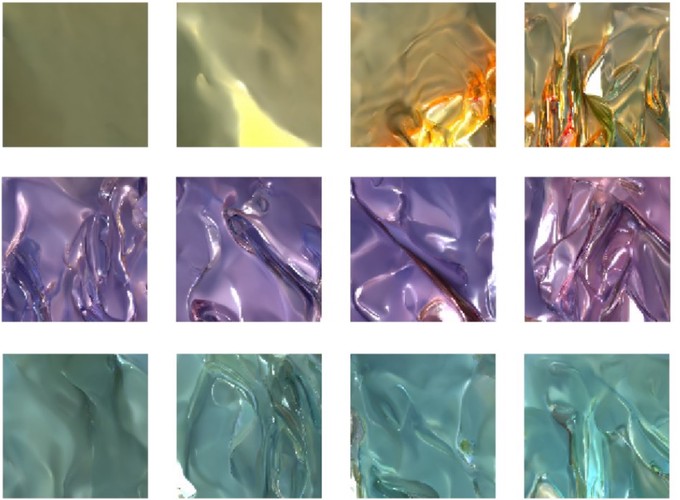

A photograph or painting of a glazed vase might consist of irregularly shaped bright patches, small white dots, and large low-contrast gradients, yet we immediately see these as reflections on the glossy surface, sharp highlights, and the smooth shading of a rounded object. How are we able to disentangle complex interacting factors like 3D shape, lighting, and surface reflectance to perceive individual physical quantities, such as how glossy a surface is? To make matters more difficult, the brain must solve this problem based on visual experience alone, since we are never told the true reflectance values of surfaces, from which we might otherwise learn. We approached the question by using a 3D rendering engine to render tens of thousands of images of simple visual scenes (bumpy surfaces with different material properties, seen under diverse lighting conditions), and then training artificial visual systems (deep neural networks) to generate new images that followed the same ‘rules.’ This is an ‘unsupervised learning’ goal: the network learns high-level statistical regularities in images that allow it to create entirely novel images that look like plausible real surfaces, without ever being told about physical properties. We found that, in their internal representations, the unsupervised networks spontaneously clustered images by physical properties like reflectance and illumination, despite receiving no explicit information about them. Most intriguingly, their representations also predict specific patterns of ‘successes’ and ‘errors’ in human perception. The unsupervised networks predict human gloss perception better than ground truth, supervised networks, or various baseline models, and can predict, on an image-by-image basis, illusions of gloss perception caused by interactions between material, shape, and lighting.
We suggest that the brain learns about materials (and perhaps many other properties of the world!) by learning the ways in which images vary within and across our moment-to-moment visual experience.